We know that Microsoft has integrated Python in SQL Server 2017 to enable rich data analytics capabilities within the database itself. Python is one of the most powerful languages which provides lots of built-in libraries for advanced data analytics and transformations. We can use Python for almost everything from website development to robotics and Data Science. In SQL Server 2017, Python can be used primarily for Machine learning purposes but it is not limited to that only. We can also use Python for complex data transformations and analysis which might be a bit tedious and complex while doing the same using T-SQL in SQL Server.

In this post, we will be exploring an use case example of Python for data transformation in SQL Server 2017. If you want to read more about Python and how to use it in SQL Server, you can visit my previous blog post “Why Python and how to use it in SQL Server 2017“. If you want to explore new features of SQL Server 2017, visit this post “SQL Server 2017 New Features“. To know, why you should learn Python, read this stack overflow article “The Incredible Growth of Python“. Now, let’s continue with the Python use case example.

Python use case – Map unequal comma separated values from two columns

In this example, assume that we have a table named “tbl_EmpProjectManagerDetail” with 3 columns which are “EmpName“, “CommaSepProjectNames“, and “CommaSepManagerNames“. Below is the T-SQL script to create the table and to add some sample data in it.

CREATE TABLE dbo.tbl_EmpProjectManagerDetail

(

EmpName varchar(256),

CommaSepProjectNames varchar(max),

CommaSepManagerNames varchar(max),

)

GO

INSERT INTO dbo.tbl_EmpProjectManagerDetail

(EmpName, CommaSepProjectNames, CommaSepManagerNames)

VALUES

('John', 'ERP,HR Assistant,Admin Helper', 'Kevin,John,Alen'),

('David', 'HR Assistant,Admin Helper,Airline Management,Traffic Controller,Travel Solutions', 'John,Alen,James,Hedge'),

('Steve', 'Power Management,School Management,One touch Attendance system', 'Stuart,Finch,Broad,Clark')

GO

Let’s have a look at the table data which is as below:

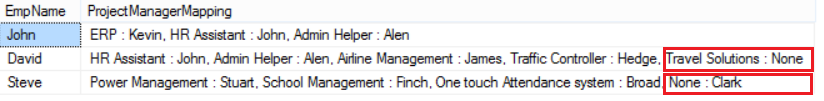

The column EmpName contains the name of employees, CommaSepProjectNames column contains the comma-separated names of projects in an ordered way in which the employee has worked so far, and CommaSepManagerNames column contains the comma-separated names of the managers for these projects in an ordered way. However, the comma-separated list of projects and managers can be unequal, and in this case, the last values from any of these columns need to be mapped to “None” value in the output instead of discarding these values. The final output will have two columns as “EmpName“, and “ProjectManagerMapping“. The column “EmpName” will have the name of the employees and the column “ProjectManagerMapping” will have the list of projects and managers mapped with each other in the order they are recorded in the columns “CommaSepProjectNames” and “CommaSepManagerNames“. Also, in case of unequal length, we need to map the last values from any of these columns with None value. Below is the screenshot of the required output:

In above image, we can see that in the column “ProjectManagerMapping“, for the employee “David”, no manager has defined for the project “Travel Solutions” (Highlighted in red rectangle in the second row). Also, for the employee “Steve”, no project name has defined for manager “Clark” (Highlighted in red rectangle in the third row). Now, let’s talk about the solution which we are going to implement using Python script which will execute in-database using inbuilt system stored procedure “sp_execute_external_script”.

Map unequal comma separated values from two columns using Python Script in SQL Server 2017

Undoubtedly, we can achieve the above output using T-SQL code in a set based way. However, the purpose of this article is to explore an use case scenario of Python’s data transformation capability which can be used in SQL Server 2017. Also, we are not going to compare the performance of achieving this output using T-SQL or using an in-database Python script. If you want to go through the set based approach techniques in SQL Server, you can visit my video tutorial series which explains how to think in sets in SQL Server.

Let’s have a look at the code being used to achieve the above output using Python script in SQL Server:

EXEC sp_execute_external_script

@language = N'Python',

@script =

N'

tblData = InputDataSet #Reads the input data in a data frame received from SQL Server

from itertools import zip_longest

import pandas as pd

lst = []

for pair, emp in zip(zip(tblData.CommaSepProjectNames.str.split(","), tblData.CommaSepManagerNames.str.split(",")), tblData.EmpName):

lst.append([emp, str(list(" : ".join(t) for t in (list(zip_longest(pair[0], pair[1], fillvalue="None"))))).lstrip("[").rstrip("]").replace("''", "")])

OutputDataSet = pd.DataFrame(lst)

',

@input_data_1 = N'SELECT EmpName, CommaSepProjectNames, CommaSepManagerNames FROM dbo.tbl_EmpProjectManagerDetail'

WITH RESULT SETS(("EmpName" varchar(256), "ProjectManagerMapping" varchar(max)))

How it is working

Let’s discuss the above code in more detail. In the above code, we are using stored procedure “sp_execute_external_script” to execute an external Python script in SQL Server. We are inputting the data from the table “tbl_EmpProjectManagerDetail” to the Python script using “@input_data_1” parameter of the procedure “sp_execute_external_script”. The @script parameter contains the actual Python code to transform the input data into the required output. Let’s talk about the Python code which we are using above.

Python provides a function named zip_longest which resides inside the itertools module and can be used to map values from two iterables considering the longest one. In our Python script, in the first line, we are assigning the input dataframe to a variable named tblData using below line:

tblData = InputDataSet

Then, we are importing the required modules using below lines of code:

from itertools import zip_longest import pandas as pd

After that, we are creating an empty list because we know that a list is a mutable object which allows modifying the data in place. In this way, a list will help us to improve the performance of the execution of the code.

lst = []

Then, we are using a for loop (which can also be converted into a list comprehension which can outperform the for loop) to transform the data as below.

for pair, emp in zip(zip(tblData.CommaSepProjectNames.str.split(","), tblData.CommaSepManagerNames.str.split(",")), tblData.EmpName):

lst.append([emp, str(list(" : ".join(t) for t in (list(zip_longest(pair[0], pair[1], fillvalue="None"))))).lstrip("[").rstrip("]").replace("''", "")])

In the above line of code, first, we are combining the values from both of the columns (CommaSepProjectNames, CommaSepManagerNames) using zip function as “zip(tblData.CommaSepProjectNames.str.split(“,”), tblData.CommaSepManagerNames.str.split(“,”))“. After that, we are adding employee names along with the concatenated values of these two columns using an outer zip function as “zip(zip(tblData.CommaSepProjectNames.str.split(“,”), tblData.CommaSepManagerNames.str.split(“,”)), tblData.EmpName)“. Then, we are assigning the output of the outer zip into two variables named “pair, and emp”. Next, we are using a for loop to iterate over for each row of the dataframe. Then inside the loop, we are using “lst.append([emp, str(list(” : “.join(t) for t in (list(zip_longest(pair[0], pair[1], fillvalue=”None”))))).lstrip(“[“).rstrip(“]”).replace(“””, “”)])” to map values from both the columns considering the longest with a filler value “None” for the unmapped values followed by a conversion of the elements of the list into string using join method with some cleanup activity, finally, these values gets appended into the list.

Now, let’s talk quickly about the performance summary of the above code.

Quick Performance Summary

Though I have not compared the performance of the above code with T-SQL set based code. But, in a quick testing, I found that this code is running quite faster than expected. Below is the summary of the quick test. Please note that the execution time is an average of multiple executions.

I will recommend you to perform extensive testing of the above code before moving it to the production environment.

Thanks for the reading. Please share your the input in the comment section of the post.

Looking at the code you posted for the 1000 row test, I noticed that they are all balanced with the same number of ProjectNames as ManagerNames. How does that test your unbalanced Projects to Managers?

Hi Lynn,

Thanks for your feedback.

However, the sample dataset I have used is not balanced which you can see in the below image:

The data which I have generated for 1000 records are from the same base data. I have tried to make sure that each row is unique. Even if you run the sample code, you will find that the data is not evenly balanced (for managers and projects) for these 1000 rows.

This is a very cool post, Gopal. It solves an (unfortunately) common problem that we all have to contend with at one time or another. Your explanation as to how it works is great (although, not being a Python user, still have no clue as to what “pandas” is). The other thing is that you’ve done what a whole lot of people either don’t know how to do or forget to do and that’s a performance test.

On that note, I would be very interested in knowing what the larger tests consisted of for row content. For example, did you generate 1,000 rows for the 1,000 row test by simply duplicating the original 3 rows that you used as an example or where all 1,000 rows unique? As we’ve seen in doing such things as “splits” in T-SQL, it can make a huge difference in performance with the duplication of rows incorrectly showing performance because of the 3 row statistics that SQL Server contrives for the data.

Again, well done and keep doing what you do!

Hi Jeff,

Its great to hear back from you.

Thank you for going through this article and sharing your valuable inputs.

The above tests were performed on unique row sets. Here I am attaching the sample code which I used for testing purposes. This script generates 1000 unique rows and then executes the Python script on this data. It takes the same time as given.

IF OBJECT_ID(‘dbo.tbl_EmpProjectManagerDetail’) IS NOT NULL

DROP TABLE dbo.tbl_EmpProjectManagerDetail

GO

CREATE TABLE dbo.tbl_EmpProjectManagerDetail

(

EmpName varchar(256),

CommaSepProjectNames varchar(max),

CommaSepManagerNames varchar(max),

)

GO

INSERT INTO dbo.tbl_EmpProjectManagerDetail

(EmpName, CommaSepProjectNames, CommaSepManagerNames)

VALUES

(‘John’, ‘ERP,HR Assistant,Admin Helper’, ‘Kevin,John,Alen’),

(‘David’, ‘HR Assistant,Admin Helper,Airline Management,Traffic Controller,Travel Solutions’, ‘John,Alen,James,Hedge’),

(‘Steve’, ‘Power Management,School Management,One touch Attendance system’, ‘Stuart,Finch,Broad,Clark’),

(‘Albert’, ‘ERP,HR Assistant’, ‘Kevin,John’),

(‘Adam’, ‘School Management,One touch Attendance system,Power Management’, ‘Finch,Broad,Stuart,Clark’),

(‘Smith’, ‘Admin Helper,Airline Management,Traffic Controller,Travel Solutions,HR Assistant’, ‘Alen,James,Hedge,John’),

(‘Polluck’, ‘Power Management,ERP,HR Assistant’, ‘Stuart,Kevin,John’),

(‘William’, ‘One touch Attendance system,Power Management,School Management’, ‘Broad,Stuart,Finch,Clark’),

(‘Anderson’, ‘Airline Management,Traffic Controller,Travel Solutions,HR Assistant,Admin Helper’, ‘James,Hedge,John,Alen’),

(‘Jim’, ‘ERP,HR Assistant,Power Management’, ‘Kevin,John,Stuart’)

GO

IF OBJECT_ID(‘tempdb..#test’) IS NOT NULL

DROP TABLE #test

GO

SELECT

DT.Seq,

DT.EmpName + CAST(DT.Seq AS VARCHAR(5)) + ‘_’ + REPLICATE(CHAR(65 + (ABS(CHECKSUM(NEWID())) % 26)), 5) AS EmpName,

REPLACE(DT.CommaSepProjectNames, ‘,’, ‘,’ + CAST(DT.Seq AS VARCHAR(5))) AS CommaSepProjectNames,

REPLACE(DT.CommaSepManagerNames, ‘,’, ‘,’ + CAST(DT.Seq AS VARCHAR(5))) AS CommaSepManagerNames

INTO #test

FROM

(

SELECT ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS Seq, A.*

FROM dbo.tbl_EmpProjectManagerDetail A

CROSS JOIN dbo.tbl_EmpProjectManagerDetail B

CROSS JOIN dbo.tbl_EmpProjectManagerDetail C

) DT

–select * from #test order by seq

EXEC sp_execute_external_script

@language = N’Python’,

@script =

N’

tblData = InputDataSet #Reads the input data in a data frame received from SQL Server

from itertools import zip_longest

import pandas as pd

lst = []

for pair, emp in zip(zip(tblData.CommaSepProjectNames.str.split(“,”), tblData.CommaSepManagerNames.str.split(“,”)), tblData.EmpName):

lst.append([emp, str(list(” : “.join(t) for t in (list(zip_longest(pair[0], pair[1], fillvalue=”None”))))).lstrip(“[“).rstrip(“]”).replace(“””, “”)])

OutputDataSet = pd.DataFrame(lst)

‘,

@input_data_1 = N’SELECT EmpName, CommaSepProjectNames, CommaSepManagerNames FROM #test’

WITH RESULT SETS((“EmpName” varchar(256), “ProjectManagerMapping” varchar(max)))